Derivatives – Serlo

The derivative is one of the central concepts of calculus. For a given function $f$, the derivative $f'$ is another function which specifies the rate of change of $f$ at each point $x$. It is used in many scientific disciplines, essentially wherever there is a "rate of change" within a dynamical system. Knowing about derivatives means having a powerful tool at hand: it allows you to describe and predict rates of change in a huge variety of applications.

Intuitions of the derivative

The derivative is a mathematical object which is useful in many situations. Depending on the situation, there are several intuitions which can make this abstract object come alive in your mind:

- Derivative as instantaneous rate of change: The derivative corresponds to what we intuitively understand as the rate of change of a function $f$ at some instant $x$. A rate of change ($\tfrac{\Delta f}{\Delta x}$) describes how much a quantity changes ($\Delta f$) in relation to the change of some reference quantity ($\Delta x$). If we let $\Delta x$ run to 0, we get the rate of change within an "infinitely small amount of time". Speed is an example: consider a time-dependent position $x(t)$, i.e. the function $f$ is re-labelled as $x$ and the argument $x$ is re-labelled as $t$. The quotient $\tfrac{\Delta x}{\Delta t}$ of "travelled distance" and "elapsed time" describes the "average speed". In order to get the speed at some time $t$, we make the time difference $\Delta t$ smaller and smaller, such that the "average speed" goes over to an "instantaneous speed" $v(t)$. This is called the first derivative, and mathematicians write $v(t) = x'(t)$.

- Derivative as tangent slope: The derivative corresponds to the slope of the tangent to the graph at the point where the derivative is taken. Thus the derivative solves the geometric problem of determining the tangent to a graph through a given point.

- Derivative as slope of the locally best linear approximation: Any function that has a derivative at a point can be well approximated by a linear function in a neighbourhood of this point. The derivative corresponds to the slope of this linear function. This is useful if the function is hard to compute: in many cases the linear approximation is much easier to evaluate.

- Derivative as generalised slope: How steep is a given function? At first, the concept of the "slope of a function" is only defined for linear functions. But we can use the derivative to define the "slope" also for non-linear functions.

We will discuss these intuitions in detail in the following and use them to derive a formal definition of the derivative. We will also see that differentiable functions are "kink-free", which is why they are often described as smooth (think of smoothly bending some dough or tissue).

Derivative as rate of change

Introduction to the derivative

The derivative corresponds to the rate of change of a function $f$. How can this rate of change be determined or defined? Let, for example, $f$ be a real-valued function which has the following graph:

For example, $f$ may describe a physical quantity in relation to another quantity. $f(x)$ could correspond to the distance covered by an object at the time $x$. $f(x)$ could also be the air pressure at the altitude $x$ or the population size of a species at the time $x$. Now let us take the argument $\tilde{x}$, where the function has the function value $f(\tilde{x})$:

Let us assume that $f(x)$ is the distance travelled by a car at the time $x$. Then the current rate of change of $f$ at the position $\tilde{x}$ is equal to the velocity of the car at the time $\tilde{x}$.

It is hard to determine the velocity directly with only $f$ given. But we can estimate it. We take a point in time $x_1$ shortly after $\tilde{x}$ and look at the average speed in the time interval $[\tilde{x}, x_1]$. The distance travelled in that time is $f(x_1) - f(\tilde{x})$, while the time difference is $x_1 - \tilde{x}$. Thus the car has the average speed

$$\frac{f(x_1) - f(\tilde{x})}{x_1 - \tilde{x}}$$

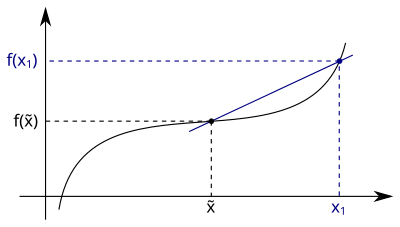

This quotient, which indicates the average rate of change of the function $f$ in the interval $[\tilde{x}, x_1]$, is called the difference quotient. As its name suggests, it is a quotient of two differences. In the following figure we see that this difference quotient is equal to the slope of the secant passing through the points $(\tilde{x}, f(\tilde{x}))$ and $(x_1, f(x_1))$:

This average speed is a good approximation of the current speed of our car at the time $\tilde{x}$. It is only an approximation since the movement of the car between $\tilde{x}$ and $x_1$ need not be uniform: the car can accelerate or decelerate. But we should get a better result if we shorten the period for calculating the average speed. So let's look at a time $x_2$ which is even closer to $\tilde{x}$ and determine the average speed for the new time interval between $\tilde{x}$ and $x_2$:

We can shorten the time difference even further by taking a sequence of times $(x_n)_{n\in\mathbb{N}}$ which converges towards $\tilde{x}$. For every $x_n$ we calculate the average speed of the car in the period from $\tilde{x}$ to $x_n$. The shorter this period, the less the car should be able to accelerate or decelerate in it. So the average speed should converge to the current speed of the car at time $\tilde{x}$:

$$\text{velocity at time } \tilde{x} = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

Thus we have found a method to determine the current rate of change of $f$ at time $\tilde{x}$: We take any sequence of arguments $(x_n)_{n\in\mathbb{N}}$ which are all different from $\tilde{x}$ and for which $\lim_{n\to\infty} x_n = \tilde{x}$. For every $x_n$ we determine the quotient $\tfrac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$. The current rate of change is the limit of these quotients:

$$\lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

The derivative of $f$ at $\tilde{x}$ is denoted as $f'(\tilde{x})$. So we have the mathematical definition:

$$f'(\tilde{x}) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

The limit of the difference quotient is sometimes also called the differential quotient.
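The limiting process can be tried out numerically. The following is a minimal sketch, assuming a hypothetical distance function `f(x) = x**2`, the time `x_tilde = 1` and the sequence `x_n = x_tilde + 1/n` (none of these are taken from the text). It only illustrates how the difference quotients settle near one value and is no substitute for computing the limit.

```python
def difference_quotient(f, x_tilde, x):
    """Average rate of change of f between x_tilde and x (requires x != x_tilde)."""
    return (f(x) - f(x_tilde)) / (x - x_tilde)


def f(x):
    # Hypothetical distance travelled by the car at time x (an assumption).
    return x ** 2


x_tilde = 1.0

# Sequence x_n = x_tilde + 1/n, which converges to x_tilde from above.
quotients = [difference_quotient(f, x_tilde, x_tilde + 1 / n)
             for n in (1, 10, 100, 1000)]

for q in quotients:
    print(q)
```

For this particular choice the printed average speeds approach 2, which is the value the definition assigns to the derivative of this example function at 1.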

Negative time intervals

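What happens if we do not choose $x_1$ in the future, but in the past of $\tilde{x}$? Let us draw this situation in a picture:

The average speed in the interval from $x_1$ to $\tilde{x}$ is then equal to $\tfrac{f(\tilde{x}) - f(x_1)}{\tilde{x} - x_1}$. If we expand this fraction by a factor of $-1$, we get

$$\frac{f(\tilde{x}) - f(x_1)}{\tilde{x} - x_1} = \frac{-(f(\tilde{x}) - f(x_1))}{-(\tilde{x} - x_1)} = \frac{f(x_1) - f(\tilde{x})}{x_1 - \tilde{x}}$$

We get the same term as in the previous section. It gives the average speed, no matter whether $x_1 > \tilde{x}$ or $x_1 < \tilde{x}$. Thus, in the case of a negative time interval with $x_1 < \tilde{x}$, the average speed should also be close to the current speed of the car at the time $\tilde{x}$, provided $x_1$ is sufficiently close to $\tilde{x}$. We have

$$f'(\tilde{x}) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

where $(x_n)_{n\in\mathbb{N}}$ is any sequence of arguments different from $\tilde{x}$ with $\lim_{n\to\infty} x_n = \tilde{x}$. The sequence elements $x_n$ can sometimes be larger and sometimes smaller than $\tilde{x}$, depending on the index $n$: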

Refining the definition

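Let now $f: D \to \mathbb{R}$ be a real-valued function and let $\tilde{x} \in D$. As we have seen above, we have

$$f'(\tilde{x}) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

where $(x_n)_{n\in\mathbb{N}}$ is a sequence of arguments different from $\tilde{x}$ which converges to $\tilde{x}$. In order to have at least one such sequence of arguments, $\tilde{x}$ must be an accumulation point of the domain $D$ (an element is an accumulation point of a set exactly when there is a sequence in that set which does not include the element but converges towards it). This may sound more complicated than it often is. In most cases $D$ is an interval and then every $\tilde{x} \in D$ is an accumulation point of $D$. For the definition of the differential quotient it should not matter which sequence we choose. Accordingly, we can define the derivative:

Let $f: D \to \mathbb{R}$ with $D \subseteq \mathbb{R}$ and let $\tilde{x} \in D$ be an accumulation point of $D$. The function $f$ is differentiable at $\tilde{x}$ with derivative $f'(\tilde{x})$, if for every sequence $(x_n)_{n\in\mathbb{N}}$ of arguments different from $\tilde{x}$ and with $\lim_{n\to\infty} x_n = \tilde{x}$ we have:

$$f'(\tilde{x}) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

We can shorten this definition by using limits for functions. As a reminder: by definition, $\lim_{x\to\tilde{x}} g(x) = c$ holds if and only if $\lim_{n\to\infty} g(x_n) = c$ for all sequences $(x_n)_{n\in\mathbb{N}}$ of arguments not equal to $\tilde{x}$ with $\lim_{n\to\infty} x_n = \tilde{x}$. So:

Let $f: D \to \mathbb{R}$ with $D \subseteq \mathbb{R}$ and let $\tilde{x} \in D$ be an accumulation point of $D$. The function $f$ is differentiable at $\tilde{x}$ with derivative $f'(\tilde{x})$, whenever:

$$f'(\tilde{x}) = \lim_{x\to\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$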

The h-method

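There is an equivalent way to define the derivative. For this we start from the differential quotient $\lim_{x\to\tilde{x}} \tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$ and perform the substitution $x = \tilde{x} + h$. The new variable $h$ just describes the difference between $x$ and the point $\tilde{x}$ where the difference quotient is formed. The statement $x \to \tilde{x}$ is equivalent to $h \to 0$. So we can also define the derivative as follows:

Let $f: D \to \mathbb{R}$ with $D \subseteq \mathbb{R}$ and let $\tilde{x} \in D$ be an accumulation point of $D$. The function $f$ is differentiable at $\tilde{x}$ with derivative $f'(\tilde{x})$, whenever:

$$f'(\tilde{x}) = \lim_{h\to 0} \frac{f(\tilde{x} + h) - f(\tilde{x})}{h}$$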
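The h-method translates almost literally into a small numerical experiment. The sketch below assumes an example function `cube(x) = x**3` and the point 2 (both chosen only for illustration), and evaluates the quotient for positive and negative offsets `h`. It is a floating-point illustration, not a proof of convergence.

```python
def h_quotient(f, x_tilde, h):
    """Difference quotient written with the offset h = x - x_tilde (requires h != 0)."""
    return (f(x_tilde + h) - f(x_tilde)) / h


def cube(x):
    # Example function (an assumption for this sketch); its derivative at 2 is 12.
    return x ** 3


# Positive and negative h are both allowed, h only has to approach 0.
for h in (0.1, -0.1, 1e-3, -1e-3, 1e-5):
    print(h, h_quotient(cube, 2.0, h))

estimate = h_quotient(cube, 2.0, 1e-5)
```

Choosing `h` extremely small is not always better in floating-point arithmetic, because the subtraction in the numerator loses precision. This is a numerical side effect and does not affect the mathematical definition.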

Applications in science and technology

We have come to know the derivative as the current rate of change of a quantity. As such, it occurs frequently in science and its applications. Several quantities are defined as rates of change, for example:

- Velocity: The velocity is the instantaneous rate of change of the distance travelled by an object.

- Acceleration: The acceleration is the instantaneous rate of change of the speed of an object.

- Pressure change: Let $p(h)$ be the air pressure at altitude $h$. The derivative $p'(h)$ is the rate of change of air pressure with altitude. This example shows that the rate of change need not always be related to time. It can also be the rate of change with respect to another quantity, e.g. altitude.

- Chemical reaction rate: Let's consider a chemical reaction $A \to B$. Let $c_A(t)$ be the concentration of the substance $A$ at time $t$. The derivative $c_A'(t)$ is the instantaneous rate of change of the concentration of $A$ and thus indicates how much of the substance $A$ is converted into the substance $B$. Thus $c_A'(t)$ indicates the chemical reaction rate for the reaction $A \to B$.

- Population growth: Often the number $N(t)$ of individuals in a population is considered (for example the number of people on the planet, the number of bacteria in a Petri dish, the number of animals of a species or the number of atoms of a radioactive substance). The derivative $N'(t)$ represents the instantaneous rate of change of the number of individuals at the time $t$.

Definitions

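Derivative and differentiability

Definition

Let $f: D \to \mathbb{R}$ with $D \subseteq \mathbb{R}$ and let $\tilde{x} \in D$ be an accumulation point of $D$. The function $f$ is differentiable at $\tilde{x}$ with derivative $f'(\tilde{x})$, whenever:

$$f'(\tilde{x}) = \lim_{x\to\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$

Equivalently, we can require:

$$f'(\tilde{x}) = \lim_{h\to 0} \frac{f(\tilde{x} + h) - f(\tilde{x})}{h}$$

A function $f$ that has a derivative at $\tilde{x}$ is called differentiable at the position $\tilde{x}$. A function is called differentiable if the above limit exists at every position within its domain of definition. That is, differentiable functions are differentiable at every point where they are defined.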

Difference quotient and differential quotient

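The terms "difference quotient" and "differential quotient" are mathematically defined as follows: the difference quotient of $f$ between $\tilde{x}$ and $x \neq \tilde{x}$ is the expression $\tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$, and the differential quotient of $f$ at $\tilde{x}$ is its limit $\lim_{x\to\tilde{x}} \tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$.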

Derivative function

If a function $f: D \to \mathbb{R}$ with $D \subseteq \mathbb{R}$ is differentiable at every point within its domain of definition, then $f$ has a derivative at every point in $D$. The function that assigns to every argument $x \in D$ the derivative $f'(x)$ is called the derivative function of $f$:

Definition (Derivative function)

Let $f: D \to \mathbb{R}$ be a differentiable function with $D \subseteq \mathbb{R}$. We define the derivative function by

$$f': D \to \mathbb{R}, \quad x \mapsto f'(x)$$

If the derivative function $f'$ is additionally continuous, we call $f$ continuously differentiable.

Warning

The terms "continuously differentiable" and "differentiable" are not equivalent. The continuity of the derivative function has to be imposed separately.

Notations

Historically, different notations have been developed to represent the derivative of a function. In this article we have so far only used the notation $f'$ for the derivative of $f$. It goes back to the mathematician Joseph-Louis Lagrange, who introduced it in 1797. Within this notation the second derivative of $f$ is denoted $f''$ and the $n$-th derivative is denoted $f^{(n)}$.

Isaac Newton (the founder of differential calculus besides Leibniz) denoted the first derivative of $x$ by $\dot{x}$; accordingly he denoted the second derivative by $\ddot{x}$. Nowadays this notation is mainly used in physics for the derivative with respect to time.

Gottfried Wilhelm Leibniz introduced for the first derivative of $f$ with respect to the variable $x$ the notation $\tfrac{\mathrm{d}f}{\mathrm{d}x}(x)$. This notation is read as "d f over d x of x". The second derivative is then denoted $\tfrac{\mathrm{d}^2 f}{\mathrm{d}x^2}(x)$ and the $n$-th derivative is written as $\tfrac{\mathrm{d}^n f}{\mathrm{d}x^n}(x)$.

The notation of Leibniz is, mathematically speaking, not a fraction! The symbols $\mathrm{d}f$ and $\mathrm{d}x$ are called differentials, but in modern calculus (apart from the theory of so-called "differential forms") they have only a symbolic meaning. They are only allowed in this notation as formal differential quotients. There are applications of derivatives (like the "chain rule" or "integration by substitution") in which the differentials $\mathrm{d}f$ or $\mathrm{d}x$ can be handled as if they were ordinary variables and which lead to correct results. But since there are no differentials in modern calculus, such calculations are not mathematically rigorous.

The notation $Df$ or $D_x f$ for the first derivative of $f$ dates back to Leonhard Euler. In this notation, the second derivative is written as $D^2 f$ or $D_x^2 f$ and the $n$-th derivative as $D^n f$ or $D_x^n f$.

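Overview of notations

| Notation of … | 1st derivative | 2nd derivative | $n$-th derivative |
|---|---|---|---|
| Lagrange | $f'(x)$ | $f''(x)$ | $f^{(n)}(x)$ |
| Newton | $\dot{x}$ | $\ddot{x}$ | |
| Leibniz | $\tfrac{\mathrm{d}f}{\mathrm{d}x}(x)$ | $\tfrac{\mathrm{d}^2 f}{\mathrm{d}x^2}(x)$ | $\tfrac{\mathrm{d}^n f}{\mathrm{d}x^n}(x)$ |
| Euler | $Df$ | $D^2 f$ | $D^n f$ |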

Derivative as tangential slope

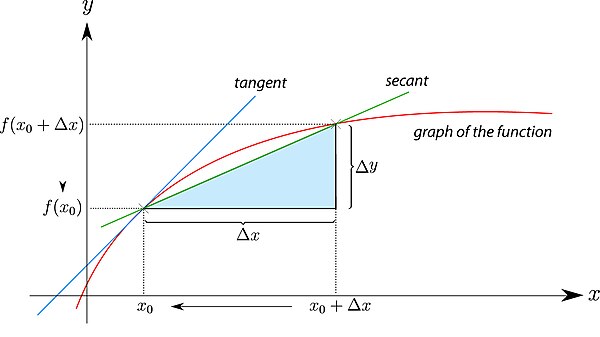

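The derivative $f'(\tilde{x})$ corresponds to the limit $\lim_{x\to\tilde{x}} \tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$. The difference quotient $\tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$ is the slope of the secant between the points $(\tilde{x}, f(\tilde{x}))$ and $(x, f(x))$. In the limit $x \to \tilde{x}$, this secant merges into the tangent that touches the graph of $f$ at the point $(\tilde{x}, f(\tilde{x}))$: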

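Thus the derivative is equal to the slope of the tangent to the graph through the point $(\tilde{x}, f(\tilde{x}))$. We may therefore use the derivative to compute the tangent to a graph. With $f'(\tilde{x})$ we know the slope of the tangent. The offset can be determined using the fact that $(\tilde{x}, f(\tilde{x}))$ is a point on the tangent. The following question illustrates how this works:

Question: What is the tangent equation if its slope is $f'(\tilde{x})$ and it passes through the point $(\tilde{x}, f(\tilde{x}))$?

The general formula of a linear function is $t(x) = m \cdot x + b$, where $m$ is the slope of $t$ and $b$ is the intersection of $t$ with the y-axis (offset). Now let $t$ be the tangent you are looking for. It has slope $f'(\tilde{x})$ and therefore $t(x) = f'(\tilde{x}) \cdot x + b$.

So we only need to find the offset $b$. Since $t$ passes through the point $(\tilde{x}, f(\tilde{x}))$, we have

$$f(\tilde{x}) = t(\tilde{x}) = f'(\tilde{x}) \cdot \tilde{x} + b \implies b = f(\tilde{x}) - f'(\tilde{x}) \cdot \tilde{x}$$

So

$$t(x) = f'(\tilde{x}) \cdot x + f(\tilde{x}) - f'(\tilde{x}) \cdot \tilde{x} = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$$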

We note: knowing the derivative at a point (and the point itself) suffices for computing the equation of the tangent.
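The computation of the offset can be sketched in a few lines. The helper name `tangent_coefficients` and the example values (the square function at the point 3, with function value 9 and slope 6) are assumptions made for illustration only.

```python
def tangent_coefficients(x_tilde, f_value, slope):
    """Return (m, b) of the tangent t(x) = m*x + b through (x_tilde, f_value)."""
    b = f_value - slope * x_tilde  # offset, obtained from t(x_tilde) = f_value
    return slope, b


# Example: f(x) = x**2 at x_tilde = 3, where f(3) = 9 and f'(3) = 6.
m, b = tangent_coefficients(3.0, 9.0, 6.0)


def t(x):
    """Tangent line for the example above."""
    return m * x + b


print(f"t(x) = {m} * x + ({b})")
print(t(3.0))  # the tangent passes through the point (3, 9)
```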

Derivative as characterization of best approximations

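Approximating a differentiable function

The derivative can be used to approximate a function. One may even define the derivative as the "best linear approximation" to a function. To find this approximation we start with the definition of the derivative as a limit:

$$f'(\tilde{x}) = \lim_{x\to\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$

The difference quotient $\tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$ gets arbitrarily close to the derivative $f'(\tilde{x})$ if $x$ gets sufficiently close to $\tilde{x}$. For $x \approx \tilde{x}$ we can write:

$$f'(\tilde{x}) \approx \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$

In the following we assume that the relation "$\approx$" (read: "is approximately as large as") is well defined and obeys the common arithmetic laws for equations. So we can rearrange this to

$$\begin{aligned}&&f'(\tilde{x})&\approx \frac{f(x)-f(\tilde{x})}{x-\tilde{x}}\\[0.3em]&\implies {}&f'(\tilde{x})\cdot (x-\tilde{x})&\approx f(x)-f(\tilde{x})\\[0.3em]&\implies {}&f(\tilde{x})+f'(\tilde{x})\cdot (x-\tilde{x})&\approx f(x)\\[0.3em]&\implies {}&f(x)&\approx f(\tilde{x})+f'(\tilde{x})\cdot (x-\tilde{x})\end{aligned}$$

If $x$ is sufficiently close to $\tilde{x}$, then $f(x)$ is approximately equal to $f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$. This value can thus be used as an approximation of $f(x)$ near the point where the derivative is taken. The function $t$ with the assignment rule $t(x) = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$ is a linear function, since $\tilde{x}$ is an arbitrary but fixed point.

The assignment rule $t(x) = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$ describes the tangent which touches the graph of the function at the position where the derivative is taken. Thus, the tangent near the point of contact is a good approximation of the graph. This is also shown in the following diagram. If one zooms in close enough at a point of a differentiable function, the graph looks approximately like a straight line:

This line is described by the assignment rule $t(x) = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$ and corresponds to the tangent of the graph at this position.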

Example: The sine for small angles

Let's take a look at an example. For this we consider the sine function $\sin: \mathbb{R} \to \mathbb{R}$. Its graph is

As we shall see, the derivative of the sine is the cosine and thus

$$\sin'(0) = \cos(0) = 1$$

The linear approximation of the sine at $\tilde{x} = 0$ is hence

$$\sin(x) \approx \sin(0) + \sin'(0) \cdot (x - 0) = 0 + 1 \cdot x = x$$

In the vicinity of zero, we have $\sin(x) \approx x$. This is the so-called small-angle approximation. Thus, for instance, $\sin(0.01)$ can be approximated by $0.01$. With $\sin(0.01) = 0.00999983\ldots$ this approximation is also quite good. The following diagram shows that near zero, the sine function can be described approximately by the line $y = x$:

The diagram also shows that this approximation is only good near the point where the derivative is taken. For values far away from zero, $\sin(x)$ differs greatly from $x$. The approximation is therefore only meaningful for small arguments!
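How quickly the small-angle approximation degrades can be checked numerically. The sample arguments below are arbitrary choices for this sketch.

```python
import math

# Absolute error of the approximation sin(x) ≈ x for a few sample arguments.
errors = {x: abs(math.sin(x) - x) for x in (0.01, 0.1, 0.5, 2.0)}

for x, err in errors.items():
    print(f"x = {x}: sin(x) = {math.sin(x):.6f}, |sin(x) - x| = {err:.2e}")
```

The error stays tiny for the arguments close to zero and grows to more than 1 at `x = 2.0`, in line with the remark that the approximation is only meaningful for small arguments.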

Quality of approximations

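How good is the approximation $f(x) \approx f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$? To answer this, let $\epsilon(x)$ be the value with

$$\frac{f(x) - f(\tilde{x})}{x - \tilde{x}} = f'(\tilde{x}) + \epsilon(x)$$

The value $\epsilon(x)$ is therefore the difference between the difference quotient $\tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$ and the derivative $f'(\tilde{x})$. This difference disappears in the limit $x \to \tilde{x}$, because for this limit the difference quotient turns into the differential quotient, i.e. the derivative $f'(\tilde{x})$. So we have $\lim_{x\to\tilde{x}} \epsilon(x) = 0$. Now we can rearrange the above equation and get

$$\begin{aligned}&&\frac{f(x)-f(\tilde{x})}{x-\tilde{x}}&=f'(\tilde{x})+\epsilon (x)\\[0.3em]&\implies {}&f(x)-f(\tilde{x})&=f'(\tilde{x})\cdot (x-\tilde{x})+\epsilon (x)\cdot (x-\tilde{x})\\[0.3em]&\implies {}&f(x)&=f(\tilde{x})+f'(\tilde{x})\cdot (x-\tilde{x})+\underbrace{\epsilon (x)\cdot (x-\tilde{x})}_{:=\ \delta (x)}\\[0.3em]&\implies {}&f(x)&=f(\tilde{x})+f'(\tilde{x})\cdot (x-\tilde{x})+\delta (x)\end{aligned}$$

The error between $f(x)$ and $f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x})$ is thus equal to the term $\delta(x) = \epsilon(x) \cdot (x - \tilde{x})$. Because of $\lim_{x\to\tilde{x}} \epsilon(x) = 0$ we have

$$\lim_{x\to\tilde{x}} \delta(x) = \lim_{x\to\tilde{x}} \epsilon(x) \cdot (x - \tilde{x}) = 0 \cdot 0 = 0$$

So the error disappears for $x \to \tilde{x}$. But we can say even more: $\delta(x)$ decreases towards zero faster than a linear term. Even if we divide $\delta(x)$ by $x - \tilde{x}$ and thus greatly increase this term near $\tilde{x}$, the quotient $\tfrac{\delta(x)}{x - \tilde{x}}$ still disappears for $x \to \tilde{x}$. We have

$$\lim_{x\to\tilde{x}} \frac{\delta(x)}{x - \tilde{x}} = \lim_{x\to\tilde{x}} \frac{\epsilon(x) \cdot (x - \tilde{x})}{x - \tilde{x}} = \lim_{x\to\tilde{x}} \epsilon(x) = 0$$

The error $\delta(x)$ in the approximation thus falls off to zero faster than linearly for $x \to \tilde{x}$. Let us summarize the previous argument in one theorem:

Theorem (Approximation of a differentiable function)

Let $f: D \to \mathbb{R}$ and let $\tilde{x} \in D$ be an accumulation point of $D$. Let $f$ also be differentiable at the point $\tilde{x}$ with the derivative $f'(\tilde{x})$. Let $\epsilon: D \to \mathbb{R}$ and $\delta: D \to \mathbb{R}$ be defined such that for all $x \in D$ we have

$$\begin{aligned}f(x)&=f(\tilde{x})+f'(\tilde{x})\cdot (x-\tilde{x})+\epsilon (x)\cdot (x-\tilde{x})\\[0.3em]&=f(\tilde{x})+f'(\tilde{x})\cdot (x-\tilde{x})+\delta (x)\end{aligned}$$

Then the error term $\delta(x)$ vanishes faster than linearly for $x \to \tilde{x}$, i.e. $\lim_{x\to\tilde{x}} \tfrac{\delta(x)}{x - \tilde{x}} = 0$. For $\epsilon$ we accordingly have $\lim_{x\to\tilde{x}} \epsilon(x) = 0$.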
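The "faster than linear" decay of the error can be observed numerically. The sketch below assumes the example function `f(x) = x**2` at the point 1, with derivative 2 there (an illustrative choice, not part of the theorem), and prints the error of the linear approximation together with the error divided by the distance to the point.

```python
def f(x):
    # Example function (an assumption for this sketch); f'(x) = 2x.
    return x ** 2


x_tilde = 1.0
slope = 2 * x_tilde  # derivative of the example function at x_tilde


def delta(x):
    """Error of the linear approximation f(x_tilde) + slope * (x - x_tilde) at x."""
    return f(x) - (f(x_tilde) + slope * (x - x_tilde))


steps = [10.0 ** -k for k in range(1, 5)]          # distances x - x_tilde
errors = [delta(x_tilde + s) for s in steps]       # delta(x)
ratios = [e / s for e, s in zip(errors, steps)]    # delta(x) / (x - x_tilde)

for s, e, r in zip(steps, errors, ratios):
    print(f"x - x_tilde = {s:.0e}: error = {e:.3e}, error / distance = {r:.3e}")
```

For this example the error equals the squared distance, so even the ratio in the last column shrinks towards zero.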

Alternative definition of the derivative

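The fact that differentiable functions can be approximated by linear functions characterises the derivative. A function $f$ is differentiable at the position $\tilde{x}$ if a real number $c$ (best approximation parameter) as well as a function $\delta$ exist, such that $f(x) = f(\tilde{x}) + c \cdot (x - \tilde{x}) + \delta(x)$ and $\lim_{x\to\tilde{x}} \tfrac{\delta(x)}{x - \tilde{x}} = 0$ hold. Its derivative is then $f'(\tilde{x}) = c$. Indeed, we have

$$\begin{aligned}f'(\tilde{x})&=\lim_{x\to \tilde{x}}\frac{f(x)-f(\tilde{x})}{x-\tilde{x}}\\[0.3em]&=\lim_{x\to \tilde{x}}\frac{f(\tilde{x})+c\cdot (x-\tilde{x})+\delta (x)-f(\tilde{x})}{x-\tilde{x}}\\[0.3em]&=\lim_{x\to \tilde{x}}\frac{c\cdot (x-\tilde{x})+\delta (x)}{x-\tilde{x}}\\[0.3em]&=\lim_{x\to \tilde{x}}\left(c+\underbrace{\frac{\delta (x)}{x-\tilde{x}}}_{\to 0}\right)\\[0.3em]&=c\end{aligned}$$

So we can also define the derivative as follows:

Definition (Alternative definition of the derivative)

Let $f: D \to \mathbb{R}$ be a function and $\tilde{x} \in D$ an accumulation point of $D$. The function $f$ is differentiable with the derivative $f'(\tilde{x}) = c$ at the point $\tilde{x}$ if a function $\delta: D \to \mathbb{R}$ exists, such that

$$f(x) = f(\tilde{x}) + c \cdot (x - \tilde{x}) + \delta(x)$$

and $\lim_{x\to\tilde{x}} \tfrac{\delta(x)}{x - \tilde{x}} = 0$ holds.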

Describing derivatives using a continuous function

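There is a further characterisation of the derivative. We start with the formula

$$f(x) = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x}) + \epsilon(x) \cdot (x - \tilde{x})$$

where $\epsilon(x)$ is the difference between the difference quotient and the derivative (which disappears for $x \to \tilde{x}$). If we rearrange this formula we get:

$$\begin{aligned}&&f(x)&=f(\tilde{x})+(f'(\tilde{x})+\epsilon (x))\cdot (x-\tilde{x})\\[0.3em]&&&\ \left\downarrow \ \varphi (x)=f'(\tilde{x})+\epsilon (x)\right.\\[0.3em]&\implies {}&f(x)&=f(\tilde{x})+\varphi (x)\cdot (x-\tilde{x})\end{aligned}$$

The function $\varphi(x) = f'(\tilde{x}) + \epsilon(x)$ for $x \neq \tilde{x}$ has the property

$$\lim_{x\to\tilde{x}} \varphi(x) = \lim_{x\to\tilde{x}} \left(f'(\tilde{x}) + \epsilon(x)\right) = f'(\tilde{x})$$

Thus $\varphi$ can be extended to a function which is continuous at the position $\tilde{x}$, whereby the function value is set to $\varphi(\tilde{x}) = f'(\tilde{x})$. This representation of a differentiable function allows a further characterisation of differentiable functions:

Theorem (Equivalent characterisation of the derivative)

A function $f: D \to \mathbb{R}$ is differentiable at $\tilde{x} \in D$ if and only if there is a function $\varphi: D \to \mathbb{R}$ continuous at $\tilde{x}$ with:

$$f(x) = f(\tilde{x}) + \varphi(x) \cdot (x - \tilde{x})$$

In that case, $\varphi(\tilde{x}) = f'(\tilde{x})$.

Proof (Equivalent characterisation of the derivative)

Proof step: If $f$ is differentiable at $\tilde{x}$, then $f(x) = f(\tilde{x}) + \varphi(x) \cdot (x - \tilde{x})$ with $\varphi$ continuous at $\tilde{x}$ and $\varphi(\tilde{x}) = f'(\tilde{x})$

Let $f(x) = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x}) + \delta(x)$, where $\delta$ is a function with $\lim_{x\to\tilde{x}} \tfrac{\delta(x)}{x - \tilde{x}} = 0$. Now, for $x \neq \tilde{x}$ we have

$$f(x) = f(\tilde{x}) + \left(f'(\tilde{x}) + \frac{\delta(x)}{x - \tilde{x}}\right) \cdot (x - \tilde{x})$$

We now define $\varphi(x) = f'(\tilde{x}) + \tfrac{\delta(x)}{x - \tilde{x}}$ for $x \neq \tilde{x}$ and $\varphi(\tilde{x}) = f'(\tilde{x})$. Then we get

$$\lim_{x\to\tilde{x}} \varphi(x) = f'(\tilde{x}) + \lim_{x\to\tilde{x}} \frac{\delta(x)}{x - \tilde{x}} = f'(\tilde{x}) = \varphi(\tilde{x})$$

So $\varphi$ is continuous at $\tilde{x}$ with $\varphi(\tilde{x}) = f'(\tilde{x})$.

Proof step: If $f(x) = f(\tilde{x}) + \varphi(x) \cdot (x - \tilde{x})$ with $\varphi$ continuous at $\tilde{x}$, then $f$ is differentiable at $\tilde{x}$ with $f'(\tilde{x}) = \varphi(\tilde{x})$

Let now $f(x) = f(\tilde{x}) + \varphi(x) \cdot (x - \tilde{x})$ with a function $\varphi$ continuous at $\tilde{x}$, where $\varphi(\tilde{x}) = c$. For $x \neq \tilde{x}$ we then have

$$f(x) = f(\tilde{x}) + c \cdot (x - \tilde{x}) + (\varphi(x) - c) \cdot (x - \tilde{x})$$

Now, we define $\delta(x) = (\varphi(x) - c) \cdot (x - \tilde{x})$ and get

$$\lim_{x\to\tilde{x}} \frac{\delta(x)}{x - \tilde{x}} = \lim_{x\to\tilde{x}} \left(\varphi(x) - \varphi(\tilde{x})\right) = 0$$

By the alternative definition of the derivative, $f$ is therefore differentiable at $\tilde{x}$ with $f'(\tilde{x}) = c = \varphi(\tilde{x})$.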

Derivative as generalized slope

The slope is initially only defined for linear functions $f: \mathbb{R} \to \mathbb{R}$ with the assignment rule $f(x) = m \cdot x + b$ where $m, b \in \mathbb{R}$. For such functions the slope is equal to the value $m$ and can be calculated using the difference quotient. For two different arguments $x_1$ and $x_2$ from the domain of definition we have:

$$\frac{f(x_2) - f(x_1)}{x_2 - x_1} = \frac{(m x_2 + b) - (m x_1 + b)}{x_2 - x_1} = \frac{m \cdot (x_2 - x_1)}{x_2 - x_1} = m$$

Now $m$ is also the derivative of $f$ at every accumulation point $\tilde{x}$ of the domain of definition:

$$f'(\tilde{x}) = \lim_{x\to\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}} = \lim_{x\to\tilde{x}} m = m$$

The derivative of a linear function is therefore always equal to its slope. But the derivative is more general: it is defined for all differentiable functions. (Remember: A term $A$ is a generalisation of another term $B$ if $A$ is the same as $B$ in all cases where $B$ is defined and $A$ can also be applied to other cases.)

So we can consider the derivative as the slope of a function at a point. The transition from slope to derivative thus changes a global property (the slope of a linear function is defined for the whole function) into a local property (the derivative is the instantaneous rate of change of a function at a point).

Examples

Example of a differentiable function

Example (The square function is differentiable at )

The square function can be differentiated at the position with derivative . We get this result if we evaluate the differential quotient at the position :

The latter expression shows that the difference quotient is equal to for (for the difference quotient is not defined because otherwise we would divide by zero). Now we have to determine the limit value of as :

Thus the derivative of at the position is equal to , i.e. . Analogously, we can determine the derivative of at any position :

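$$f'(\tilde{x}) = \lim_{x\to\tilde{x}} \frac{x^2 - \tilde{x}^2}{x - \tilde{x}} = \lim_{x\to\tilde{x}} \frac{(x - \tilde{x}) \cdot (x + \tilde{x})}{x - \tilde{x}} = \lim_{x\to\tilde{x}} (x + \tilde{x}) = 2\tilde{x}$$

Thus the derivative of the square function $f(x) = x^2$ at the position $\tilde{x}$ is equal to $2\tilde{x}$. The derivative function of $f$ is therefore the function $f': \mathbb{R} \to \mathbb{R},\ x \mapsto 2x$.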

Example of a non-differentiable function

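Example (Absolute value function is not differentiable)

We consider the absolute value function $f: \mathbb{R} \to \mathbb{R},\ x \mapsto |x|$ and check whether it can be differentiated at the position $\tilde{x} = 0$. Here we select the sequences $(a_n)_{n\in\mathbb{N}}$, $(b_n)_{n\in\mathbb{N}}$ and $(c_n)_{n\in\mathbb{N}}$ with

$$a_n = \frac{1}{n}, \qquad b_n = -\frac{1}{n}, \qquad c_n = \frac{(-1)^n}{n}$$

These all converge to $0$. What are the differential quotients corresponding to those sequences? For $(a_n)$ we have:

$$\lim_{n\to\infty} \frac{|a_n| - |0|}{a_n - 0} = \lim_{n\to\infty} \frac{\tfrac{1}{n}}{\tfrac{1}{n}} = 1$$

For $(b_n)$ we get:

$$\lim_{n\to\infty} \frac{|b_n| - |0|}{b_n - 0} = \lim_{n\to\infty} \frac{\tfrac{1}{n}}{-\tfrac{1}{n}} = -1$$

For $(c_n)$ we have:

$$\lim_{n\to\infty} \frac{|c_n| - |0|}{c_n - 0} = \lim_{n\to\infty} \frac{\tfrac{1}{n}}{\tfrac{(-1)^n}{n}} = \lim_{n\to\infty} (-1)^n$$

This limit for the sequence $(c_n)$ does not exist. We therefore see that, depending on the sequence chosen, the limit value is different or does not exist. Thus, according to the definition, the limit $\lim_{x\to 0} \tfrac{|x| - |0|}{x - 0}$ does not exist either. So the function $f$ cannot be differentiated at the position $\tilde{x} = 0$. The absolute value function has no derivative at zero.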
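The behaviour of the difference quotient along different sequences can be reproduced with a short script. It assumes the three sequences `1/n`, `-1/n` and `(-1)**n / n` (a choice made for this sketch) and evaluates the difference quotient of the absolute value function at zero along each of them.

```python
def quotient_at_zero(x):
    """Difference quotient of the absolute value function between 0 and x (x != 0)."""
    return (abs(x) - abs(0)) / (x - 0)


indices = range(1, 7)
along_a = [quotient_at_zero(1 / n) for n in indices]           # approaches 0 from the right
along_b = [quotient_at_zero(-1 / n) for n in indices]          # approaches 0 from the left
along_c = [quotient_at_zero((-1) ** n / n) for n in indices]   # alternates sides

print(along_a)
print(along_b)
print(along_c)
```

The first list is constantly 1, the second constantly -1, and the third keeps jumping between the two, so no common limit can exist.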

Left-hand and right-hand derivative

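Definition

The derivative of a function $f$ is the limit of the difference quotient $\tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$ for $x \to \tilde{x}$. The difference quotient can be understood as a function $x \mapsto \tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$, which is defined for all $x \in D$ except for $x = \tilde{x}$. So $f'(\tilde{x})$ is actually the limit value of a function.

The terms "left-hand limit" and "right-hand limit" can also be considered for the difference quotient. Thus we obtain the terms "left-hand" and "right-hand" derivative. For the left-hand derivative, only secants to the left of the considered point are evaluated. So only difference quotients $\tfrac{f(x) - f(\tilde{x})}{x - \tilde{x}}$ are considered where $x < \tilde{x}$. Then it is checked whether the difference quotient converges to a number in the limit $x \to \tilde{x}$. If the answer is yes, then this number is the left-hand derivative at that point:

$$f'_-(\tilde{x}) = \lim_{x\uparrow\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$

Here $f'_-(\tilde{x})$ is the notation for the left-hand derivative of $f$ at the position $\tilde{x}$. For this limit to make sense, there must be at least one sequence of arguments that converges from the left towards $\tilde{x}$. So $\tilde{x}$ has to be an accumulation point of the set $\{x \in D : x < \tilde{x}\}$.

Definition (Left-hand derivative)

Let $f: D \to \mathbb{R}$ be a function and $\tilde{x} \in D$ an accumulation point of the set $\{x \in D : x < \tilde{x}\}$. The number $f'_-(\tilde{x})$ is the left-hand derivative of $f$ at the position $\tilde{x}$, if

$$f'_-(\tilde{x}) = \lim_{x\uparrow\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$

This is equivalent to the statement that for all sequences $(x_n)_{n\in\mathbb{N}}$ from $D$ with $x_n < \tilde{x}$ for all $n$ and $\lim_{n\to\infty} x_n = \tilde{x}$ we have

$$f'_-(\tilde{x}) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

Analogously, the right-hand derivative can be defined as follows:

Definition (Right-hand derivative)

Let $f: D \to \mathbb{R}$ be a function and $\tilde{x} \in D$ an accumulation point of the set $\{x \in D : x > \tilde{x}\}$. The number $f'_+(\tilde{x})$ is the right-hand derivative of $f$ at the position $\tilde{x}$, if

$$f'_+(\tilde{x}) = \lim_{x\downarrow\tilde{x}} \frac{f(x) - f(\tilde{x})}{x - \tilde{x}}$$

This is equivalent to the statement that for all sequences $(x_n)_{n\in\mathbb{N}}$ from $D$ with $x_n > \tilde{x}$ for all $n$ and $\lim_{n\to\infty} x_n = \tilde{x}$ we have

$$f'_+(\tilde{x}) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$$

A function only has a limit value at a position if both the left-hand and the right-hand limit value exist at this position and both limit values match. We can apply this theorem directly to derivatives:

A function is differentiable at a position in its domain of definition if and only if both the left-hand and the right-hand derivative exist there and both derivatives coincide.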

Example

We have already shown that the absolute value function $f(x) = |x|$ is not differentiable at $\tilde{x} = 0$. However, we can still show that the right-hand derivative exists at this position and is equal to $1$:

$$f'_+(0) = \lim_{x\downarrow 0} \frac{|x| - |0|}{x - 0} = \lim_{x\downarrow 0} \frac{x}{x} = 1$$

Analogously, we can show that the left-hand derivative is equal to $-1$ at this position:

$$f'_-(0) = \lim_{x\uparrow 0} \frac{|x| - |0|}{x - 0} = \lim_{x\uparrow 0} \frac{-x}{x} = -1$$

Since the right-hand and left-hand derivatives do not coincide, the absolute value function cannot be differentiated at $\tilde{x} = 0$. At this point, it has left-hand and right-hand derivatives, but no derivative.

Differentiable functions do not have kinks

In the above example we have seen that the absolute value function is not differentiable at zero. This is because the absolute value function "has a kink" at the position $\tilde{x} = 0$, so that the left-hand and right-hand derivative are different. If we approach $0$ from the left-hand side, the derivative is equal to $-1$, while the derivative from the right-hand side is equal to $1$. The kink in the absolute value function thus prevents differentiability.

So if a function has a kink, it is not differentiable at this point. In other words: differentiable functions are kink-free. Therefore they are informally also called smooth functions (strictly speaking, smooth means "infinitely many times differentiable"). This does not mean, however, that kink-free functions are automatically differentiable. As an example, let us consider the sign function $\operatorname{sgn}: \mathbb{R} \to \mathbb{R}$ with the definition

$$\operatorname{sgn}(x) = \begin{cases} 1 & x > 0 \\ 0 & x = 0 \\ -1 & x < 0 \end{cases}$$

Its graph is

This function is not differentiable at the zero point $\tilde{x} = 0$, because near the "jump" of the function, the difference quotient diverges to infinity. For the right-hand derivative we have for example:

$$\lim_{x\downarrow 0} \frac{\operatorname{sgn}(x) - \operatorname{sgn}(0)}{x - 0} = \lim_{x\downarrow 0} \frac{1 - 0}{x} = \lim_{x\downarrow 0} \frac{1}{x} = \infty$$

The sign function has no kink at the zero point. Instead, it makes a "jump" there.

The example of the sign function shows that being "free of kinks" and being "differentiable" cannot be the same. However, freedom from kinks is a prerequisite for differentiability. So differentiable functions are free of kinks.
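The divergence of the difference quotient at the jump can be made visible numerically. The sketch below uses the usual sign function with value 0 at 0 (an assumption about the exact definition used here) and evaluates the right-hand difference quotient for shrinking positive offsets.

```python
def sgn(x):
    """Sign function: -1 for negative x, 0 for x == 0, 1 for positive x."""
    return (x > 0) - (x < 0)


def right_quotient(h):
    """Difference quotient of sgn between 0 and h for h > 0."""
    return (sgn(h) - sgn(0)) / h


quotients = [right_quotient(h) for h in (1e-1, 1e-3, 1e-6)]
print(quotients)
```

The values grow without bound as the offset shrinks, so no finite right-hand derivative can exist at zero.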

Relations between differentiability, continuity and continuous differentiability

Continuous differentiability of a function implies its differentiability, which in turn implies its continuity. The converse statements do not hold, as we will see in the course of this section:

$$f \text{ continuously differentiable} \implies f \text{ differentiable} \implies f \text{ continuous}$$

The first implication follows directly from the definition: A function is called continuously differentiable if it is differentiable and the derivative function is continuous. Thus, continuously differentiable functions are also differentiable. The second implication needs some more work:

Differentiable functions are continuous

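We now show that every function that is differentiable at a point is also continuous at this point. Thus, differentiability is a stronger condition for a function than continuity:

Theorem

Let $f: D \to \mathbb{R}$ with $D \subseteq \mathbb{R}$ be a function that is differentiable at $\tilde{x} \in D$. Then $f$ is also continuous at $\tilde{x}$. Consequently, every differentiable function is continuous.

Proof

Let $(x_n)_{n\in\mathbb{N}}$ be any sequence in $D$ converging to $\tilde{x}$. Since $f$ is differentiable at $\tilde{x}$, there is a function $\delta: D \to \mathbb{R}$ ("approximation error") with $\lim_{x\to\tilde{x}} \tfrac{\delta(x)}{x - \tilde{x}} = 0$, such that for all $x$ in $D$ we have

$$f(x) = f(\tilde{x}) + f'(\tilde{x}) \cdot (x - \tilde{x}) + \delta(x)$$

In this case, we also have $\lim_{x\to\tilde{x}} \delta(x) = 0$, and the equation above gives $\delta(\tilde{x}) = 0$. Since $\lim_{n\to\infty} x_n = \tilde{x}$, we also have $\lim_{n\to\infty} \delta(x_n) = 0$. So:

$$\lim_{n\to\infty} f(x_n) = \lim_{n\to\infty} f(\tilde{x}) + f'(\tilde{x}) \cdot \lim_{n\to\infty} (x_n - \tilde{x}) + \lim_{n\to\infty} \delta(x_n) = f(\tilde{x}) + f'(\tilde{x}) \cdot 0 + 0 = f(\tilde{x})$$

We were allowed to pull the limits apart here because the limits $\lim_{n\to\infty} f(\tilde{x})$, $\lim_{n\to\infty} (x_n - \tilde{x})$ and $\lim_{n\to\infty} \delta(x_n)$ exist. According to the sequence definition of continuity, $\lim_{n\to\infty} f(x_n) = f(\tilde{x})$ implies that $f$ is continuous at $\tilde{x}$.

Alternative proof

Let $(x_n)_{n\in\mathbb{N}}$ be a sequence in $D$ converging towards $\tilde{x}$ whose sequence elements are not equal to $\tilde{x}$. So we have $\lim_{n\to\infty} x_n = \tilde{x}$ and $x_n \neq \tilde{x}$ for all $n \in \mathbb{N}$. Since $f$ is differentiable at $\tilde{x}$, we have $\lim_{n\to\infty} \tfrac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}} = f'(\tilde{x})$. The derivative of $f$ at the point $\tilde{x}$ is a real number. Then:

$$\lim_{n\to\infty} \left(f(x_n) - f(\tilde{x})\right) = \lim_{n\to\infty} \frac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}} \cdot \lim_{n\to\infty} (x_n - \tilde{x}) = f'(\tilde{x}) \cdot 0 = 0$$

We were allowed to pull the limits apart here because the limit values $\lim_{n\to\infty} \tfrac{f(x_n) - f(\tilde{x})}{x_n - \tilde{x}}$ and $\lim_{n\to\infty} (x_n - \tilde{x})$ exist. Thus $\lim_{n\to\infty} f(x_n) = f(\tilde{x})$ holds as long as the sequence $(x_n)_{n\in\mathbb{N}}$ attains the value $\tilde{x}$ at most a finite number of times and $\lim_{n\to\infty} x_n = \tilde{x}$ holds.

Let now $(x_n)_{n\in\mathbb{N}}$ be any sequence in $D$ which converges towards $\tilde{x}$ and whose sequence elements attain the value $\tilde{x}$ infinitely often. In this case, we take the subsequence of $(x_n)_{n\in\mathbb{N}}$ with sequence elements unequal to $\tilde{x}$ and, as above, obtain the limit $f(\tilde{x})$ for its function values (if this subsequence is infinite). The subsequence of elements equal to $\tilde{x}$ is constant and its function values trivially converge to $f(\tilde{x})$. Thus the sequence can be split into two subsequences whose function values both converge towards $f(\tilde{x})$. So we have $\lim_{n\to\infty} f(x_n) = f(\tilde{x})$.

Hence, for every sequence $(x_n)_{n\in\mathbb{N}}$ in $D$ which converges towards $\tilde{x}$, we have $\lim_{n\to\infty} f(x_n) = f(\tilde{x})$. So $f$ is continuous at the position $\tilde{x}$.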

Application: Non-continuous functions are not differentiable

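From the previous section we know that every differentiable function is continuous:

$$f \text{ differentiable at } \tilde{x} \implies f \text{ continuous at } \tilde{x}$$

Applying the principle of contraposition to this implication, we also get:

$$f \text{ not continuous at } \tilde{x} \implies f \text{ not differentiable at } \tilde{x}$$

Example: Non-continuous functions are not differentiable

Take, as an example, the sign function

$$\operatorname{sgn}(x) = \begin{cases} 1 & x > 0 \\ 0 & x = 0 \\ -1 & x < 0 \end{cases}$$

It is not continuous at $\tilde{x} = 0$. So it is also not differentiable there. We can also verify the non-differentiability directly by taking the sequence $(x_n)_{n\in\mathbb{N}}$ with $x_n = \tfrac{1}{n}$. This sequence converges towards zero. If the sign function were differentiable at $0$, then the limit value $\lim_{n\to\infty} \tfrac{\operatorname{sgn}(x_n) - \operatorname{sgn}(0)}{x_n - 0}$ would have to exist. However

$$\lim_{n\to\infty} \frac{\operatorname{sgn}(x_n) - \operatorname{sgn}(0)}{x_n - 0} = \lim_{n\to\infty} \frac{1 - 0}{\tfrac{1}{n}} = \lim_{n\to\infty} n = \infty$$

The limit value does not exist in $\mathbb{R}$. Therefore the sign function is, as expected, not differentiable at $\tilde{x} = 0$.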

Not every differentiable function is continuously differentiable

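In the following example, we already use some derivative rules, which will be discussed in more detail in the next chapter. Perhaps you already know them from school. If not, they give a useful preview of what will follow.

Example (Example of a differentiable, but not continuously differentiable function)

We will show that the following function is differentiable everywhere, but its derivative function is not continuous:

$$f: \mathbb{R} \to \mathbb{R}, \quad f(x) = \begin{cases} x^2 \sin\left(\tfrac{1}{x}\right) & x \neq 0 \\ 0 & x = 0 \end{cases}$$

At every $x \neq 0$, the product and chain rule (which we will derive later) tell us that the function is differentiable infinitely often. At $x = 0$ we have

$$f'(0) = \lim_{h\to 0} \frac{f(h) - f(0)}{h} = \lim_{h\to 0} \frac{h^2 \sin\left(\tfrac{1}{h}\right)}{h} = \lim_{h\to 0} h \sin\left(\tfrac{1}{h}\right) = 0$$

where the last step uses $\left|h \sin\left(\tfrac{1}{h}\right)\right| \le |h|$. So $f$ is also differentiable at $0$ with derivative $f'(0) = 0$. However, the derivative function is not continuous at $0$. To show this, we have to determine the derivative function. For $x \neq 0$, the product and chain rule yield

$$f'(x) = 2x \sin\left(\tfrac{1}{x}\right) + x^2 \cos\left(\tfrac{1}{x}\right) \cdot \left(-\tfrac{1}{x^2}\right) = 2x \sin\left(\tfrac{1}{x}\right) - \cos\left(\tfrac{1}{x}\right)$$

Together with the derivative value $f'(0) = 0$ we get the derivative function

$$f': \mathbb{R} \to \mathbb{R}, \quad f'(x) = \begin{cases} 2x \sin\left(\tfrac{1}{x}\right) - \cos\left(\tfrac{1}{x}\right) & x \neq 0 \\ 0 & x = 0 \end{cases}$$

To show the discontinuity of $f'$ at $0$ we use the sequence definition of continuity. Let us take the sequence $(x_k)_{k\in\mathbb{N}}$ with $x_k = \tfrac{1}{k\pi}$. We have $\lim_{k\to\infty} x_k = 0$. If $f'$ were continuous at $0$, then according to the sequence criterion, $\lim_{k\to\infty} f'(x_k) = f'(0) = 0$ should hold. But now

$$f'(x_k) = \frac{2}{k\pi} \sin(k\pi) - \cos(k\pi) = 0 - (-1)^k = (-1)^{k+1}$$

The limit value $\lim_{k\to\infty} f'(x_k)$ does not exist, because the sequence $(f'(x_k))_{k\in\mathbb{N}}$ has the two accumulation points $1$ and $-1$. It follows that $f'$ is not continuous at $0$. Therefore, $f$ is differentiable, but not continuously differentiable.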
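The oscillation of the derivative near zero can be checked numerically. The sketch below assumes the function $x^2 \sin(1/x)$ (extended by 0 at zero) with the derivative formula $2x \sin(1/x) - \cos(1/x)$ for $x \neq 0$, and the sequence $x_k = 1/(k\pi)$. If the function intended in the example differs, the code only illustrates this standard case.

```python
import math


def f_prime(x):
    """Derivative of x**2 * sin(1/x), extended by the value 0 at x = 0 (assumed example)."""
    if x == 0:
        return 0.0
    return 2 * x * math.sin(1 / x) - math.cos(1 / x)


# Evaluate the derivative along x_k = 1 / (k * pi), which tends to 0.
values = [f_prime(1 / (k * math.pi)) for k in range(1, 7)]
print([round(v, 6) for v in values])
```

Up to rounding, the values alternate between 1 and -1 instead of approaching `f_prime(0) = 0`.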

Exercises

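Hyperbolic function

Exercise (Hyperbolic function is differentiable at 2)

Show that the hyperbolic function $f: \mathbb{R} \setminus \{0\} \to \mathbb{R},\ f(x) = \tfrac{1}{x}$ is differentiable at $\tilde{x} = 2$ and calculate the derivative there. What is the derivative of $f$ at any position $\tilde{x} \neq 0$?

Solution (Hyperbolic function is differentiable at 2)

The differential quotient at the position $\tilde{x} = 2$ is:

$$f'(2) = \lim_{x\to 2} \frac{\tfrac{1}{x} - \tfrac{1}{2}}{x - 2} = \lim_{x\to 2} \frac{\tfrac{2 - x}{2x}}{x - 2} = \lim_{x\to 2} \left(-\frac{1}{2x}\right) = -\frac{1}{4}$$

So $f$ is differentiable at $\tilde{x} = 2$ with the derivative $f'(2) = -\tfrac{1}{4}$. For a general $\tilde{x} \neq 0$ we have

$$f'(\tilde{x}) = \lim_{x\to\tilde{x}} \frac{\tfrac{1}{x} - \tfrac{1}{\tilde{x}}}{x - \tilde{x}} = \lim_{x\to\tilde{x}} \frac{\tfrac{\tilde{x} - x}{x \tilde{x}}}{x - \tilde{x}} = \lim_{x\to\tilde{x}} \left(-\frac{1}{x \tilde{x}}\right) = -\frac{1}{\tilde{x}^2}$$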
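The algebraic simplification of the difference quotient can be double-checked with exact rational arithmetic. The sketch assumes that the function of the exercise is $f(x) = 1/x$ and uses Python's `fractions.Fraction`, so no rounding is involved. The sample points are arbitrary.

```python
from fractions import Fraction


def difference_quotient(x, x_tilde=Fraction(2)):
    """Difference quotient of f(x) = 1/x between x_tilde and x, computed exactly."""
    return (1 / x - 1 / x_tilde) / (x - x_tilde)


# Rational sample points approaching 2.
samples = [Fraction(3), Fraction(5, 2), Fraction(21, 10), Fraction(2001, 1000)]

# For x_tilde = 2 the quotient should simplify to -1 / (2 * x).
simplified = all(difference_quotient(x) == -1 / (2 * x) for x in samples)
closest = difference_quotient(samples[-1])

print(simplified)
print(closest, float(closest))
```

The last quotient is already close to -1/4, in line with the limit computed by hand.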

Root function

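Exercise (Root function is not differentiable at 0)

Show that the root function

$$f: [0, \infty) \to \mathbb{R}, \quad f(x) = \sqrt{x}$$

is not differentiable at $\tilde{x} = 0$.

Solution (Root function is not differentiable at 0)

We must show that the differential quotient of $f$ at $\tilde{x} = 0$ does not exist. This quotient is

$$\lim_{x\to 0} \frac{\sqrt{x} - \sqrt{0}}{x - 0} = \lim_{x\to 0} \frac{\sqrt{x}}{x} = \lim_{x\to 0} \frac{1}{\sqrt{x}}$$

We choose the positive sequence $(x_n)_{n\in\mathbb{N}}$ with $x_n = \tfrac{1}{n^2}$, which converges to 0. For this sequence we have

$$\lim_{n\to\infty} \frac{1}{\sqrt{x_n}} = \lim_{n\to\infty} \frac{1}{\sqrt{\tfrac{1}{n^2}}} = \lim_{n\to\infty} n = \infty$$

Thus the differential quotient $\lim_{x\to 0} \tfrac{\sqrt{x} - \sqrt{0}}{x - 0}$ has no limit in $\mathbb{R}$. The function $f$ is therefore not differentiable at $\tilde{x} = 0$.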
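The unbounded growth of the difference quotient can also be observed numerically. The sketch assumes the sequence $x_n = 1/n^2$ and evaluates the quotient for a few indices. It illustrates the divergence but does not replace the argument above.

```python
import math


def quotient(x):
    """Difference quotient of the square root between 0 and x (x > 0)."""
    return (math.sqrt(x) - math.sqrt(0)) / (x - 0)


indices = (10, 100, 1000)
quotients = [quotient(1 / n ** 2) for n in indices]

for n, q in zip(indices, quotients):
    print(f"n = {n}: quotient = {q}")
```

Up to rounding, the quotient for index `n` equals `n` itself, so it exceeds every bound as `n` grows.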

Determining limits

Exercise (Determining limits with differential quotients)

Let $f$ be differentiable at $\tilde{x}$. Show that the following limits hold:

- Does the reverse statement also hold for the symmetric limit value $\lim_{h\to 0} \tfrac{f(\tilde{x} + h) - f(\tilde{x} - h)}{2h}$? I.e. if this limit value exists, is $f$ then differentiable at $\tilde{x}$, with $f'(\tilde{x})$ equal to this limit?

Solution (Determining limits with differential quotients)

Solution sub-exercise 1:

Since is differentiable in , there is

If we substitute , then there is . Hence

Solution sub-exercise 2:

Here, we have

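Solution sub-exercise 3:

The converse is not true. To show this we consider the absolute value function $f(x) = |x|$ at $\tilde{x} = 0$. For this function we have the limit value

$$\lim_{h\to 0} \frac{|0 + h| - |0 - h|}{2h} = \lim_{h\to 0} \frac{|h| - |h|}{2h} = 0$$

However, the absolute value function is not differentiable at 0.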
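The counterexample can be reproduced numerically. The sketch assumes that the limit in question is the symmetric difference quotient with denominator `2 * h` and compares it with the one-sided quotients of the absolute value function at zero.

```python
def symmetric_quotient(f, x_tilde, h):
    """Symmetric difference quotient (f(x_tilde + h) - f(x_tilde - h)) / (2h)."""
    return (f(x_tilde + h) - f(x_tilde - h)) / (2 * h)


def one_sided_quotient(f, x_tilde, h):
    """Ordinary difference quotient (f(x_tilde + h) - f(x_tilde)) / h."""
    return (f(x_tilde + h) - f(x_tilde)) / h


steps = [0.1, 0.01, 0.001]
symmetric = [symmetric_quotient(abs, 0.0, h) for h in steps]
from_right = [one_sided_quotient(abs, 0.0, h) for h in steps]
from_left = [one_sided_quotient(abs, 0.0, -h) for h in steps]

print(symmetric)
print(from_right)
print(from_left)
```

The symmetric quotient is 0 for every step, while the one-sided quotients stay at 1 and -1. The symmetric limit therefore exists although the function is not differentiable at zero.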

Criterion for differentiability

Exercise (Criterion for differentiability of a general function at zero)

Let . Show: if for some , then is differentiable at 0 with with .

Solution (Criterion for differentiability of a general function at zero)

There is

Since , there is

The squeeze theorem then implies


![{\displaystyle {\begin{aligned}f(x)&=f({\tilde {x}})+f'({\tilde {x}})\cdot (x-{\tilde {x}})+\delta (x)\\[0.3em]&=f({\tilde {x}})+f'({\tilde {x}})\cdot (x-{\tilde {x}})+{\tfrac {\delta (x)(x-{\tilde {x}})}{x-{\tilde {x}}}}\\[0.3em]&=f({\tilde {x}})+\left(f'({\tilde {x}})+{\tfrac {\delta (x)}{x-{\tilde {x}}}}\right)\cdot (x-{\tilde {x}})\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/45776e0914fee6a07c7f11182c1c93ee9de2b3e5)

![{\displaystyle {\begin{aligned}f(x)&=f({\tilde {x}})+\varphi (x)\cdot (x-{\tilde {x}})\\[0.3em]&=f({\tilde {x}})+(\varphi (x)+\varphi ({\tilde {x}})-\varphi ({\tilde {x}}))\cdot (x-{\tilde {x}})\\[0.3em]&=f({\tilde {x}})+\varphi ({\tilde {x}})\cdot (x-{\tilde {x}})+(\varphi (x)-\varphi ({\tilde {x}}))\cdot (x-{\tilde {x}})\\[0.3em]&=f({\tilde {x}})+f'({\tilde {x}})\cdot (x-{\tilde {x}})+(\varphi (x)-\varphi ({\tilde {x}}))\cdot (x-{\tilde {x}})\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/a2131c11be30c781372dc249e141f4ffda7fd86f)

![{\displaystyle {\begin{aligned}{\frac {g(x)-g({\tilde {x}})}{x-{\tilde {x}}}}&={\frac {(mx+b)-(m{\tilde {x}}+b)}{x-{\tilde {x}}}}\\[0.3em]&={\frac {mx-m{\tilde {x}}}{x-{\tilde {x}}}}\\[0.3em]&={\frac {m(x-{\tilde {x}})}{x-{\tilde {x}}}}\\[0.3em]&=m\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3cfc5858ba6512366ee926f8f380469553180b35)

![{\displaystyle {\begin{aligned}g'({\tilde {x}})&=\lim _{x\to {\tilde {x}}}{\frac {g(x)-g({\tilde {x}})}{x-{\tilde {x}}}}\\[0.3em]&{\color {OliveGreen}\left\downarrow \ {\text{see calculation above}}\right.}\\[0.3em]&=\lim _{x\to {\tilde {x}}}m=m\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/c2534b700fd877338dafc7e3a22948b341fd0bbc)

![{\displaystyle {\begin{aligned}f'(3)&=\lim _{h\to 0}{\frac {f(3+h)-f(3)}{h}}=\lim _{h\to 0}{\frac {(3+h)^{2}-3^{2}}{h}}\\[0.3em]&=\lim _{h\to 0}{\frac {9+6h+h^{2}-9}{h}}=\lim _{h\to 0}{\frac {6h+h^{2}}{h}}=\lim _{h\to 0}{(6+h)}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/cb6c7566af2b644ee0070166593c8e8ce26c7d32)

![{\displaystyle {\begin{aligned}f'({\tilde {x}})&=\lim _{h\to 0}{\frac {f({\tilde {x}}+h)-f({\tilde {x}})}{h}}=\lim _{h\to 0}{\frac {({\tilde {x}}+h)^{2}-{\tilde {x}}^{2}}{h}}\\[0.3em]&=\lim _{h\to 0}{\frac {{\tilde {x}}^{2}+2{\tilde {x}}h+h^{2}-{\tilde {x}}^{2}}{h}}=\lim _{h\to 0}{\frac {2{\tilde {x}}h+h^{2}}{h}}\\[0.3em]&=\lim _{h\to 0}{(2{\tilde {x}}+h)}=2{\tilde {x}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/f052a60ffcabe05eebbfc4ddfb556284f3eae7c2)

![{\displaystyle {\begin{aligned}\lim _{n\rightarrow \infty }{\frac {f(x_{n})-f(x_{0})}{x_{n}-x_{0}}}&=\lim _{n\rightarrow \infty }{\frac {|{\frac {1}{n}}|-|0|}{{\frac {1}{n}}-0}}=\lim _{n\rightarrow \infty }{\frac {\frac {1}{n}}{\frac {1}{n}}}\\[0.3em]&=\lim _{n\rightarrow \infty }{1}=1\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d0f6d1508fa7390e0d02874b6c812359c7a19619)

![{\displaystyle {\begin{aligned}\lim _{n\rightarrow \infty }{\frac {f({\tilde {x}}_{n})-f(x_{0})}{{\tilde {x}}_{n}-x_{0}}}&=\lim _{n\rightarrow \infty }{\frac {|-{\tfrac {1}{n}}|-|0|}{-{\tfrac {1}{n}}-0}}=\lim _{n\rightarrow \infty }{\frac {\tfrac {1}{n}}{-{\tfrac {1}{n}}}}\\[0.3em]&=\lim _{n\rightarrow \infty }{-1}=-1\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/86be70df4e44a50d24d254c9e5afbca72df306ba)

![{\displaystyle {\begin{aligned}\lim _{n\rightarrow \infty }{\frac {f({\hat {x}}_{n})-f(x_{0})}{{\hat {x}}_{n}-x_{0}}}&=\lim _{n\rightarrow \infty }{\frac {|(-1)^{n}{\tfrac {1}{n}}|-|0|}{(-1)^{n}{\tfrac {1}{n}}-0}}\\[0.3em]&=\lim _{n\rightarrow \infty }{\frac {\tfrac {1}{n}}{(-1)^{n}{\tfrac {1}{n}}}}=\lim _{n\rightarrow \infty }{(-1)^{n}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9538f68a6fc3330f2ed5cb86fa2920f209ee2fe6)

![{\displaystyle {\begin{aligned}{f_{+}}'(0)&=\lim _{x\downarrow 0}{\frac {f(x)-f(0)}{x-0}}=\lim _{x\downarrow 0}{\frac {|x|-|0|}{x}}\\[0.3em]&\ {\color {OliveGreen}\left\downarrow \ x>0\implies |x|=x\right.}\\[0.3em]&=\lim _{x\downarrow 0}{\frac {x-0}{x}}=\lim _{x\downarrow 0}1=1\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/050e452f972119883e0d82aecf009cafa1aaeb05)

![{\displaystyle {\begin{aligned}{f_{-}}'(0)&=\lim _{x\uparrow 0}{\frac {f(x)-f(0)}{x-0}}=\lim _{x\uparrow 0}{\frac {|x|-|0|}{x}}\\[0.3em]&\ {\color {OliveGreen}\left\downarrow \ x<0\implies |x|=-x\right.}\\[0.3em]&=\lim _{x\uparrow 0}{\frac {-x-0}{x}}=\lim _{x\uparrow 0}-1=-1\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3022cc131313897b1251dc2d4641b47eb1a8dab6)

![{\displaystyle {\begin{aligned}&\lim _{n\to \infty }{f(x_{n})}\\[0.3em]&{\color {OliveGreen}\left\downarrow \ f(x)=f({\tilde {x}})+f'({\tilde {x}})\cdot (x-{\tilde {x}})+\delta (x)\right.}\\[0.3em]=\ &\lim _{n\to \infty }{f({\tilde {x}})+f'({\tilde {x}})\cdot (x_{n}-{\tilde {x}})+\delta (x_{n})}\\[0.3em]&{\color {OliveGreen}\left\downarrow \ {\text{pull appart the limit}}\right.}\\[0.3em]=\ &\lim _{n\to \infty }\underbrace {f({\tilde {x}})} _{\to f({\tilde {x}})}+\lim _{n\to \infty }f'({\tilde {x}})\cdot \underbrace {(x_{n}-{\tilde {x}})} _{\to 0}+\lim _{n\to \infty }\underbrace {\delta (x_{n})} _{\to 0}\\[0.3em]=\ &f({\tilde {x}})+0+0\\[0.3em]=\ &f({\tilde {x}})\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/c840df1d8449d97a03d16d3c9b28d152090f49f7)

![{\displaystyle {\begin{aligned}&\lim _{n\rightarrow \infty }{f(x_{n})-f({\tilde {x}})}\\[0.3em]&{\color {OliveGreen}\left\downarrow \ \forall n\in \mathbb {N} :x_{n}-{\tilde {x}}\neq 0\right.}\\[0.3em]=\ &\lim _{n\rightarrow \infty }{\frac {(f(x_{n})-f({\tilde {x}}))\cdot (x_{n}-{\tilde {x}})}{x_{n}-{\tilde {x}}}}\\[0.3em]&{\color {OliveGreen}\left\downarrow \ {\text{pull appart the limit}}\right.}\\[0.3em]=\ &\lim _{n\rightarrow \infty }\underbrace {\frac {f(x_{n})-f({\tilde {x}})}{x_{n}-{\tilde {x}}}} _{\to f'({\tilde {x}})}\cdot \lim _{n\rightarrow \infty }\underbrace {(x_{n}-{\tilde {x}})} _{\to 0}\ \\[0.3em]=\ &f'({\tilde {x}})\cdot 0\\[0.3em]=\ &0\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/134d276767b72617ae71f7fc8b5e71e32cc0b3c1)

![{\displaystyle f'(0)=\lim _{h\to 0}{\frac {h^{2}\sin \left({\frac {1}{h}}\right)-0}{h}}=\lim _{h\to 0}\underbrace {h} _{\to 0}\cdot \underbrace {\sin \left({\frac {1}{h}}\right)} _{\in [-1,1]}=0}](https://wikimedia.org/api/rest_v1/media/math/render/svg/b60efaea08b799eeb61b5534b5178ad7491f0929)

![{\displaystyle {\begin{aligned}f'({\tilde {x}})&=\left(x^{2}\cdot \sin \left({\frac {1}{x}}\right)\right)'\\[0.3em]&=2x\sin \left({\frac {1}{x}}\right)+x^{2}\cos \left({\frac {1}{x}}\right)\left(-{\frac {1}{x^{2}}}\right)\\[0.3em]&=2x\cdot \sin \left({\frac {1}{x}}\right)-\cos \left({\frac {1}{x}}\right)\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3c057129bebea2f9f216e3d8513b5ec490421e17)

![{\displaystyle {\begin{aligned}\lim _{n\to \infty }f'(x_{n})&=\lim _{n\to \infty }\left(2x_{n}\cdot \sin \left({\frac {1}{x_{n}}}\right)-\cos \left({\frac {1}{x_{n}}}\right)\right)\\[0.3em]&=\lim _{n\to \infty }\left(2{\frac {1}{n\pi }}\cdot \sin(n\pi )-\cos(n\pi )\right)\\[0.3em]&=\lim _{n\to \infty }\left(2{\frac {1}{n\pi }}\cdot 0-(-1)^{n}\right)\\[0.3em]&=\lim _{n\to \infty }-(-1)^{n}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/16313c2244bfb36d7df8066627ff5b96099c1692)

![{\displaystyle {\begin{aligned}g'(2)&=\lim _{h\to 0}{\frac {g(2+h)-g(2)}{h}}=\lim _{h\to 0}{\frac {{\frac {1}{2+h}}-{\frac {1}{2}}}{h}}\\[0.3em]&=\lim _{h\to 0}{\frac {\frac {2-(2+h)}{2\cdot (2+h)}}{h}}=\lim _{h\to 0}{\frac {-h}{2h(h+2)}}\\[0.3em]&=\lim _{h\to 0}{\frac {-1}{2h+4}}={\frac {-1}{0+4}}=-{\frac {1}{4}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/60333530450384e7d5cbd79bc8c481ea4e63ef4c)

![{\displaystyle {\begin{aligned}g'({\tilde {x}})&=\lim _{h\to 0}{\frac {g({\tilde {x}}+h)-g({\tilde {x}})}{h}}=\lim _{h\to 0}{\frac {{\frac {1}{{\tilde {x}}+h}}-{\frac {1}{\tilde {x}}}}{h}}\\[0.3em]&=\lim _{h\to 0}{\frac {\frac {{\tilde {x}}-({\tilde {x}}+h)}{{\tilde {x}}\cdot ({\tilde {x}}+h)}}{h}}=\lim _{h\to 0}{\frac {-h}{{\tilde {x}}h(h+{\tilde {x}})}}\\[0.3em]&=\lim _{h\to 0}{\frac {-1}{{\tilde {x}}h+{\tilde {x}}^{2}}}={\frac {-1}{0+{\tilde {x}}^{2}}}=-{\frac {1}{{\tilde {x}}^{2}}}\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/e8e52958275ae63676fe78f6b3798d2d7adb6466)

![{\displaystyle {\begin{aligned}\lim _{h\to 0}{\frac {f(a-h)-f(a)}{h}}&{\overset {{\tilde {h}}=-h}{=}}\lim _{{\tilde {h}}\to 0}{\frac {f(a+{\tilde {h}})-f(a)}{-{\tilde {h}}}}\\[0.3em]&=-\lim _{{\tilde {h}}\to 0}{\frac {f(a+{\tilde {h}})-f(a)}{\tilde {h}}}=-f'(a)\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/dc162e6dad7e471b15cc32039012acc573ff3640)

![{\displaystyle {\begin{aligned}\lim _{h\to 0}{\frac {f(a+h)-f(a-h)}{2h}}&=\lim _{h\to 0}{\frac {f(a+h)-f(a)-(f(a-h)-f(a))}{2h}}\\[0.3em]&={\frac {1}{2}}\cdot \underbrace {\lim _{h\to 0}{\frac {f(a+h)-f(a)}{h}}} _{=f'(a)}-{\frac {1}{2}}\cdot \underbrace {\lim _{h\to 0}{\frac {f(a-h)-f(a)}{h}}} _{=-f'(a)}\\[0.3em]&={\frac {1}{2}}f'(a)+{\frac {1}{2}}f'(a)=f'(a)\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/cf1a3274cd70bd5596aab459a0741f5ac2f75bbe)

![{\displaystyle {\begin{aligned}\lim _{h\to 0}{\frac {f(0+h)-f(0-h)}{h}}&=\lim _{h\to 0}{\frac {|h|-|-h|}{h}}\\[0.3em]&=\lim _{h\to 0}{\frac {|h|-|h|}{h}}=\lim _{h\to 0}{\frac {0}{h}}=0\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/af58842e2b9c1b30c0486128fbcc4644e92c3974)