Exercises: Continuity – Serlo

Lipschitz continuous functions are uniformly continuous

Exercise

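Let $f: D \to \mathbb{R}$ be Lipschitz continuous with Lipschitz constant $L > 0$. That is,

$$|f(x) - f(y)| \le L \cdot |x - y|$$

for all $x, y \in D$. Prove that $f$ is uniformly continuous.

How to get to the proof?

We need to show that for all $\epsilon > 0$ there is a $\delta > 0$ such that for all $x, y \in D$ with $|x - y| < \delta$ there is $|f(x) - f(y)| < \epsilon$. By our assumption, we have

$$|f(x) - f(y)| \le L \cdot |x - y|.$$

In order for $|f(x) - f(y)| < \epsilon$ to hold, it suffices to have $L \cdot |x - y| < \epsilon$, i.e. $|x - y| < \frac{\epsilon}{L}$. We can reach this by taking $\delta = \frac{\epsilon}{L}$.

Proof

Let $\epsilon > 0$ be arbitrary. We choose $\delta = \frac{\epsilon}{L}$. Then, for all $x, y \in D$ with $|x - y| < \delta$:

$$|f(x) - f(y)| \le L \cdot |x - y| < L \cdot \delta = L \cdot \frac{\epsilon}{L} = \epsilon.$$

Since $\delta$ depends only on $\epsilon$ and not on $x$ or $y$, $f$ is uniformly continuous.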
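A quick numerical sanity check of the choice $\delta = \frac{\epsilon}{L}$ can be sketched in Python. It is not a proof, since it only samples finitely many pairs. The helper name `check_delta` and the test function $\sin$ (which is Lipschitz continuous with $L = 1$) are choices made for this illustration only.

```python
import math
import random


def check_delta(f, L, eps, lo, hi, trials=10_000, seed=0):
    """Sanity check (not a proof): sample pairs x, y with |x - y| < eps / L
    and report whether |f(x) - f(y)| < eps held for every sampled pair."""
    rng = random.Random(seed)
    delta = eps / L  # the choice from the proof; it does not depend on x or y
    for _ in range(trials):
        x = rng.uniform(lo, hi)
        # shrink the offset slightly so that |x - y| < delta holds strictly
        y = x + 0.999 * rng.uniform(-delta, delta)
        if abs(f(x) - f(y)) >= eps:
            return False
    return True


# sin is Lipschitz continuous with constant L = 1 on all of R
print(check_delta(math.sin, 1.0, 1e-3, -1000.0, 1000.0))
```

The same $\delta$ is used at every sampled location, which is exactly what uniform continuity demands. With a function that does not satisfy the Lipschitz bound for the given constant on the sampled interval, such as $x \mapsto x^2$ with $L = 1$ on $[-1000, 1000]$, the check reports a violation.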
Continuity at the origin

Exercise

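Prove that the following function is continuous at the origin $x_0 = 0$:

$$f: \mathbb{R} \to \mathbb{R}, \quad f(x) = \begin{cases} x \cdot \sin\left(\frac{1}{x}\right) & \text{for } x \neq 0, \\ 0 & \text{for } x = 0. \end{cases}$$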

How to get to the proof?

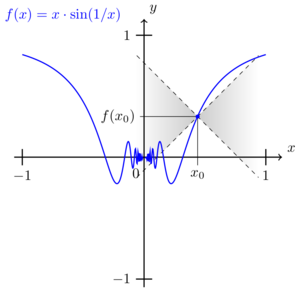

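In order to establish continuity at the origin $x_0 = 0$, we make use of the epsilon-delta criterion. That means, for all $\epsilon > 0$ we have to find a $\delta > 0$ such that the inequality $|f(x) - f(0)| < \epsilon$ holds at all $x$ with $|x| < \delta$. The question now is how to find a suitable $\delta$ for any given $\epsilon$. To answer this question, we take a look at the function around $x_0 = 0$:

$$|f(x) - f(0)| = \left|x \cdot \sin\left(\tfrac{1}{x}\right) - 0\right| = |x| \cdot \left|\sin\left(\tfrac{1}{x}\right)\right| \quad \text{for } x \neq 0.$$

Since $\left|\sin\left(\tfrac{1}{x}\right)\right| \le 1$ at $x \neq 0$, there is

$$|f(x) - f(0)| \le |x|.$$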

This inequality also holds at $x = 0$, since $|f(0) - f(0)| = 0 = |0|$. Visually, the inequality above means that the graph of the function fits inside the "double wedge" given by $|y| \le 1 \cdot |x|$, where the factor $1$ tells us how much the wedge is "stretched" in $y$-direction. So if we choose $\delta = \epsilon$, then at any $x$ with $|x| < \delta$, there is

$$|f(x) - f(0)| \le |x| < \delta = \epsilon.$$

We can use this to carry out the proof (see below).

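Note: We can also "move the double wedge" to any $x_0$ when investigating continuity at $x_0$. If the graph fits inside the "moved double wedge" $|f(x) - f(x_0)| \le C \cdot |x - x_0|$ with a constant $C > 0$, then for any $\epsilon > 0$ we may choose $\delta = \frac{\epsilon}{C}$, and there is

$$|f(x) - f(x_0)| \le C \cdot |x - x_0| < C \cdot \delta = \epsilon \quad \text{for all } x \text{ with } |x - x_0| < \delta.$$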
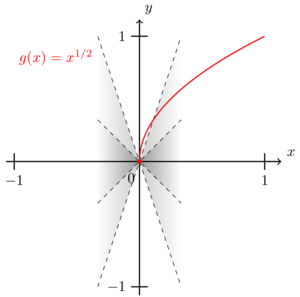

So any function fitting inside such a double wedge around $x_0$ is continuous at $x_0$. The converse does not hold: there are functions which do not fit inside any double wedge around $x_0$ but are continuous at $x_0$. An example is the square root function around $x_0 = 0$.

- The function is continuous at $x_0 = 0$.
- The function is continuous at any .
- The function is not Lipschitz continuous at $x_0 = 0$.
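The claim that the square root function does not fit inside any double wedge around $x_0 = 0$ can be made concrete: for a wedge $|y| \le C \cdot |x|$ with slope $C > 0$, the point $x = \frac{1}{4C^2}$ gives $\sqrt{x} = \frac{1}{2C} > \frac{1}{4C} = C \cdot x$. A small Python sketch (the helper `wedge_violation` is hypothetical, introduced only for this illustration) evaluates this point for a few slopes:

```python
import math


def wedge_violation(C):
    """For a wedge slope C > 0, return (x, sqrt(x), C * x) at x = 1 / (4 C^2).

    There sqrt(x) = 1 / (2 C) exceeds C * x = 1 / (4 C), so the graph of the
    square root leaves the double wedge |y| <= C |x| around x0 = 0."""
    x = 1.0 / (4.0 * C * C)
    return x, math.sqrt(x), C * x


for C in (1.0, 10.0, 1000.0):
    x, root, bound = wedge_violation(C)
    print(f"C = {C:g}: sqrt({x:.3g}) = {root:.3g} > {bound:.3g} = C * x")
```

However steep the wedge, the violating point simply moves closer to $0$, which is why no constant $C$ works.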

Proof

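Let $\epsilon > 0$. We choose $\delta = \epsilon$. Let $x$ be any real number with $|x| < \delta$. For $x = 0$ there is $|f(0) - f(0)| = 0 < \epsilon$. For $x \neq 0$:

$$\begin{aligned}
|f(x) - f(0)| &= \left|x \cdot \sin\left(\tfrac{1}{x}\right) - 0\right| \\
&= \left|x \cdot \sin\left(\tfrac{1}{x}\right)\right| \\
&\le |x| && \text{since } |\sin(\cdot)| \in [0, 1] \\
&< \delta && \text{by assumption } |x| < \delta \\
&= \epsilon && \text{by choice } \delta = \epsilon
\end{aligned}$$

This shows that $f$ is continuous at $x_0 = 0$.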
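A numerical sketch of the proof's choice $\delta = \epsilon$ for $f(x) = x \cdot \sin\left(\frac{1}{x}\right)$ with $f(0) = 0$: it samples points with $|x| < \delta$ and checks $|f(x) - f(0)| < \epsilon$. Sampling cannot replace the estimate above; the function names are illustrative only.

```python
import math
import random


def f(x):
    """x * sin(1/x) for x != 0, and 0 at x = 0."""
    return x * math.sin(1.0 / x) if x != 0 else 0.0


def check_continuity_at_0(eps, trials=10_000, seed=1):
    """Sample x with |x| < delta = eps and check |f(x) - f(0)| < eps."""
    rng = random.Random(seed)
    delta = eps  # the choice made in the proof
    for _ in range(trials):
        # shrink slightly so that |x| < delta holds strictly
        x = 0.999 * rng.uniform(-delta, delta)
        if abs(f(x) - f(0.0)) >= eps:
            return False
    return True


print(all(check_continuity_at_0(eps) for eps in (1.0, 1e-3, 1e-9)))
```

The check succeeds for very small $\epsilon$ as well, even though $\sin\left(\frac{1}{x}\right)$ oscillates faster and faster near $0$: the factor $|x|$ squeezes the oscillation into the double wedge.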

Extreme value theorem

Exercise (Maximum and minimum of a function)

Prove that the function defined on attains a maximum, but not a minimum.

Solution (Maximum and minimum of a function)

Proof step: attains a maximum

The function is continuous on , since it is composed of continuous functions and the denominator is strictly positive (). The numerator is also strictly positive, so for , , and

That means, there is an with for all . The extreme value theorem implies that the function attains a maximum on $[1, x_0]$. One can even show that this maximum is global. However, computing the maximum explicitly would require us to solve $f'(x) = 0$, which is quite a computational effort and can be even harder for other functions. The extreme value theorem allowed us to quickly show that there is a maximum and saved us from the tedious solution of $f'(x) = 0$.

Proof step: does not attain a minimum

There is $f(x) > 0$ on the whole domain. And indeed, $f$ approaches $0$ (see the previous proof step), but it does not attain $0$. Hence there cannot be a minimum: if there was an attained minimum $f(x_{\min}) > 0$, then, since $f$ approaches $0$, there would be $f(x) < f(x_{\min})$ for some $x$, which is a contradiction.

Exercise (How often is a value attained #1)

- Show that there is no continuous function that attains each of its function values exactly twice.

- Is there a continuous function that attains each of its function values exactly three times?

Exercise (How often is a value attained #2)

Let with . Show: There is no continuous function that attains each of its function values exactly times.

Intermediate value theorem and zeros

Exercise (Zero of a function)

Prove that the function

has exactly one zero inside the interval $[0, \tfrac{\pi}{2}]$.

Solution (Zero of a function)

Proof step: has at least one zero

is continuous as it is composed of the continuous functions and . In addition,

and

By means of the intermediate value theorem, there must be a zero $\tilde{x} \in [0, \tfrac{\pi}{2}]$ of the function.
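The intermediate value theorem only asserts that a zero exists. A minimal sketch of the bisection method, which repeatedly halves an interval on which a continuous function changes sign, shows how this existence statement can be turned into an approximation. The stand-in function $x - \cos(x)$ on $[0, \frac{\pi}{2}]$ is a hypothetical example chosen for illustration, not necessarily the function from this exercise.

```python
import math


def bisect(f, a, b, tol=1e-12):
    """Approximate a zero of a continuous function f on [a, b].

    Assumes f(a) and f(b) have opposite signs, i.e. the hypothesis under which
    the intermediate value theorem guarantees a zero in [a, b]."""
    fa, fb = f(a), f(b)
    if fa == 0:
        return a
    if fb == 0:
        return b
    if fa * fb > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    while b - a > tol:
        m = (a + b) / 2
        fm = f(m)
        if fm == 0:
            return m
        # keep the half on which the sign change (and hence a zero) lies
        if fa * fm < 0:
            b = m
        else:
            a, fa = m, fm
    return (a + b) / 2


# hypothetical stand-in: x - cos(x) is -1 at 0 and about pi/2 at pi/2
root = bisect(lambda x: x - math.cos(x), 0.0, math.pi / 2)
print(root)
```

Each step keeps a subinterval on which the sign change persists, so the theorem applies again and the interval length halves; uniqueness of the zero is a separate question, handled in the next proof step.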

Proof step: has exactly one zero

is strictly monotonously increasing on $[0, \tfrac{\pi}{2}]$. The function is also monotonously increasing on $[0, \tfrac{\pi}{2}]$, so is decreasing and again increasing. Hence there can be only one zero, since a function with two zeros $x_1 < x_2$ is never strictly monotonously increasing (it would either have to stay constant or go "down again" between $x_1$ and $x_2$).

Exercise (Solution of an equation)

Let with . Prove that the equation

has at least three solutions.

Solution (Solution of an equation)

It is a powerful trick in mathematics to transform the problem of finding solutions of an equation $u(x) = v(x)$ into the problem of finding zeros of an auxiliary function $u - v$ (if $u(x) = v(x)$, then $u(x) - v(x) = 0$ and vice versa). In our case, the continuous auxiliary function is

When approaching and , this function goes to

and

Therefore, there must be two arguments $x_1 < x_2$ in $(a, b)$ at which the auxiliary function takes values of opposite sign ($x_1$ is close to $a$ and $x_2$ close to $b$). By the intermediate value theorem, there must hence be a zero $\tilde{x} \in [x_1, x_2] \subset (a, b)$. This zero is one solution of the above equation.

The same argument works between and . Since and , we can use the intermediate value theorem and get a zero with . This is the second solution we have been looking for.

The third solution follows by a similar argument. There is and . So the intermediate value theorem yields a with . Therefore, the equation has at least three solutions.

Exercise (Solution of an equation)

Let $f: [0, 1] \to \mathbb{R}$ be continuous with $f(0) = f(1)$. Prove that there is a $c \in [0, \tfrac{1}{2}]$ with $f(c) = f(c + \tfrac{1}{2})$.

Solution (Solution of an equation)

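We consider the following auxiliary function:

$$h: [0, \tfrac{1}{2}] \to \mathbb{R}, \quad h(c) = f(c + \tfrac{1}{2}) - f(c)$$

Finding a $c \in [0, \tfrac{1}{2}]$ with $f(c) = f(c + \tfrac{1}{2})$ now amounts to finding a zero of $h$. Since $f$ is continuous, so is $h$. In addition, at the endpoints of the interval, there is

$$h(0) = f(\tfrac{1}{2}) - f(0)$$

and

$$h(\tfrac{1}{2}) = f(1) - f(\tfrac{1}{2}) = f(0) - f(\tfrac{1}{2}) = -h(0).$$

Case 1: $h(0) = 0$

This means $f(\tfrac{1}{2}) - f(0) = 0$, or equivalently

$$f(0 + \tfrac{1}{2}) = f(0).$$

So $c = 0$ is a solution to $f(c) = f(c + \tfrac{1}{2})$.

Case 2: $h(0) \neq 0$

We will first consider the case $h(0) > 0$. Since $h(\tfrac{1}{2}) = -h(0)$, there is $h(\tfrac{1}{2}) < 0$. The intermediate value theorem now yields a $c \in (0, \tfrac{1}{2})$ with

$$h(c) = 0, \quad \text{i.e.} \quad f(c + \tfrac{1}{2}) = f(c).$$

This is a zero of $h$ and hence a desired solution. The other case, $h(0) < 0$, can be treated using exactly the same arguments.

So for any choice of $f$, there is a $c \in [0, \tfrac{1}{2}] \subset [0, 1]$ with $f(c) = f(c + \tfrac{1}{2})$.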
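The proof is constructive enough to compute $c$: check Case 1 directly and otherwise bisect on $h(c) = f(c + \frac{1}{2}) - f(c)$, whose values at $0$ and $\frac{1}{2}$ have opposite signs. The sketch below, with the hypothetical helper `find_c`, assumes $f$ is continuous on $[0, 1]$ with $f(0) = f(1)$. The sample function $f(x) = x(1 - x)$ is only an example; for it, $h(c) = \frac{1}{4} - c$, so $c = \frac{1}{4}$.

```python
import math


def find_c(f, tol=1e-12):
    """Find c in [0, 1/2] with f(c) = f(c + 1/2) for a continuous f on [0, 1]
    with f(0) = f(1), following the case distinction of the proof."""

    def h(c):
        # auxiliary function from the proof
        return f(c + 0.5) - f(c)

    if h(0.0) == 0:
        return 0.0  # Case 1: c = 0 already works
    # Case 2: h(0) and h(1/2) = -h(0) have opposite signs, so bisect
    a, b = 0.0, 0.5
    ha = h(a)
    while b - a > tol:
        m = (a + b) / 2
        hm = h(m)
        if hm == 0:
            return m
        if ha * hm < 0:
            b = m
        else:
            a, ha = m, hm
    return (a + b) / 2


print(find_c(lambda x: x * (1 - x)))  # prints 0.25
```

Bisection is used here only because it mirrors the intermediate value theorem step by step; any other root-finding method for $h$ would do as well.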

Exercise (Existence of exactly one zero)

Let be a natural number. We define the function . Prove that has exactly one positive zero.

Solution (Existence of exactly one zero)

We need to show two things: first, that a zero exists inside the interval $(0, +\infty)$, and second, that there is indeed only one such zero.

The function is a polynomial function and hence continuous. At the beginning of the interval $(0, +\infty)$, there is , i.e. the graph of the function runs below the $x$-axis. At infinity, there is , meaning that for large $x$, the graph runs above the $x$-axis. As the function is continuous, we can apply the intermediate value theorem and get a zero $x_1 \in (0, +\infty)$.

Now we need to show that there is at most one zero. Both and are strictly monotonously increasing functions for $x > 0$. So we may conjecture that the function is also strictly monotonous there. We can prove this conjecture by taking the first derivative:

For $x > 0$ there is: .

One may show with a bit of effort that differentiable functions with positive derivative are strictly monotonous in the sense that $x < y$ implies $f(x) < f(y)$. If there were two zeros $x_1, x_2 \in (0, +\infty)$ with $x_1 < x_2$, the function would take the same value $0$ at both points although $x_1 < x_2$. This would contradict strict monotony and is hence excluded. Therefore, the function can have at most one zero (like every strictly monotonous function).

Note: We could also prove that the function has at most one zero only using that it is differentiable with a strictly positive first derivative:

Assume that the function had two zeros $x_1, x_2 \in (0, +\infty)$ with $x_1 < x_2$. Since the function is differentiable and takes the value $0$ at both points, we may use Rolle's theorem and get that some $\xi \in (x_1, x_2)$ exists at which the first derivative vanishes. But this contradicts the first derivative being strictly positive. So this is a second way to exclude the existence of two zeros.

Continuity of the inverse function

Exercise (Continuity of the inverse function 1)

Let $f: (-1, 1) \to \mathbb{R}$ be defined by

- Prove that $f$ is continuous, strictly monotonous and injective.

- Prove that $f$ is surjective (so it is a one-to-one map from $(-1, 1)$ to $\mathbb{R}$ and an inverse function exists).

- Why is the inverse function $f^{-1}: \mathbb{R} \to (-1, 1)$ continuous, monotonously increasing and bijective? Explicitly determine $f^{-1}$.

Solution (Continuity of the inverse function 1)

Part 1: $f$ is continuous on $(-1, 1)$ since it is the quotient of the continuous polynomials and . Note that for all $x \in (-1, 1)$.

Let $x_1, x_2 \in (-1, 1)$ with $x_1 < x_2$. Then, strict monotony holds:

Therefore, $f$ is also injective.

Part 2: The function runs towards infinity at the end points of the open interval $(-1, 1)$ as follows:

Since $f$ is continuous, the intermediate value theorem ensures that for each $y \in \mathbb{R}$ there is an $x \in (-1, 1)$ mapped onto it: $f(x) = y$. Therefore, $f$ is also surjective: $f[(-1, 1)] = \mathbb{R}$.

Part 3: Since $f$ is bijective, the inverse map $f^{-1}: \mathbb{R} \to (-1, 1)$ exists and is bijective as well.

The theorem about continuity of the inverse function tells us that $f^{-1}$ is continuous and strictly monotonously increasing. Now, let us compute $f^{-1}$. That means we need to bring $y = f(x)$ into the form $x = f^{-1}(y)$, i.e. we need to get $x$ standing alone on the left side of the equation:

Case 1:

Case 2:

We can use the quadratic solution formula in order to solve for :

Since for , the only reasonable solution is . Putting it all together, the full definition of the inverse function reads

Hint

The distinction of two cases above is not very convenient. We can avoid it using a little trick: In case , numerator and denominator of can be multiplied by a factor of :

Plugging in , we get that is described correctly. So we can use the definition above for all and avoid the case distinction.

Exercise (Continuity of the inverse function 2)

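Let

$$g: [0, \infty) \to \mathbb{R}, \quad g(x) = \ln(x + e) - \cos\left(\frac{1}{\ln(x + e)}\right).$$

- Prove that $g$ is injective.

- Determine the range $g\big[[0, \infty)\big]$ of all attained values.

- Why is the inverse function $g^{-1}$ continuous?

Solution (Continuity of the inverse function 2)

Part 1:

$g$ is continuous, as it is composed of the continuous functions $x \mapsto x + e$, $\ln$, $t \mapsto \frac{1}{t}$ and $\cos$ on $[0, \infty)$ (note that $\ln(x + e) \ge 1$ there, so there is no division by zero).

The logarithm is strictly monotonously increasing (and its reciprocal is decreasing): for $x_1, x_2 \in [0, \infty)$ with $x_1 < x_2$, there is:

$$\ln(x_1 + e) < \ln(x_2 + e) \quad \text{and} \quad \frac{1}{\ln(x_1 + e)} > \frac{1}{\ln(x_2 + e)}$$

Now, $\frac{1}{\ln(x + e)} \in (0, 1]$ for $x \in [0, \infty)$. Since, in addition, $\cos$ is strictly monotonously decreasing on $(0, 1]$, we have

$$\cos\left(\frac{1}{\ln(x_1 + e)}\right) < \cos\left(\frac{1}{\ln(x_2 + e)}\right)$$

So the $\cos$-term is also strictly monotonously increasing. Because it is subtracted in $g$, we additionally need that it grows more slowly than the logarithm. With $u_i = \ln(x_i + e)$ we have $1 \le u_1 < u_2$, and since $|\cos(s) - \cos(t)| \le |s - t|$,

$$\cos\left(\tfrac{1}{u_2}\right) - \cos\left(\tfrac{1}{u_1}\right) \le \frac{1}{u_1} - \frac{1}{u_2} = \frac{u_2 - u_1}{u_1 u_2} < u_2 - u_1.$$

Rearranging gives $u_1 - \cos\left(\tfrac{1}{u_1}\right) < u_2 - \cos\left(\tfrac{1}{u_2}\right)$, so $g$ is strictly monotonously increasing:

$$g(x_1) < g(x_2)$$

Therefore, $g$ is also injective.

Part 2:

At the ends of the domain of definition, there is

$$g(0) = \ln(e) - \cos\left(\frac{1}{\ln(e)}\right) = 1 - \cos(1)$$

and

$$\lim_{x \to \infty} g(x) = \lim_{x \to \infty} \Big[\underbrace{\ln(x + e)}_{\to \infty} - \underbrace{\cos\left(\tfrac{1}{\ln(x + e)}\right)}_{\to \cos(0) = 1}\Big] = \infty;$$

this implies that $g$ is unbounded from above.

$g$ is continuous on the interval $[0, \infty)$. Hence, we can use a corollary of the intermediate value theorem and get that $g\big[[0, \infty)\big]$ is again an interval. Since $g$ is strictly monotonously increasing, its smallest value is $g(0) = 1 - \cos(1)$, and we can conclude

$$g\big[[0, \infty)\big] = [1 - \cos(1), \infty)$$

Part 3:

Since $g\big[[0, \infty)\big]$ is an interval and $g: [0, \infty) \to [1 - \cos(1), \infty)$ is bijective, we can use the theorem about continuity of the inverse function. It tells us that

$$g^{-1}: [1 - \cos(1), \infty) \to [0, \infty)$$

is indeed continuous.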
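Because the function is continuous, strictly increasing and unbounded from above, its inverse can be evaluated numerically by bisection, which makes the existence of a continuous inverse tangible. The sketch assumes the function of this exercise is $g(x) = \ln(x + e) - \cos\left(\frac{1}{\ln(x + e)}\right)$ on $[0, \infty)$; `g_inverse` is a hypothetical helper name, and the tolerance is a relative one so the loop also terminates for large arguments.

```python
import math


def g(x):
    """Assumed function: g(x) = ln(x + e) - cos(1 / ln(x + e)) on [0, inf)."""
    u = math.log(x + math.e)
    return u - math.cos(1.0 / u)


def g_inverse(y, tol=1e-12):
    """Evaluate g^{-1}(y) for y >= g(0) = 1 - cos(1) by bisection.

    Relies on g being continuous and strictly increasing with g(x) -> infinity,
    so exactly one x >= 0 satisfies g(x) = y."""
    if y < g(0.0):
        raise ValueError("y lies outside the range [1 - cos(1), infinity)")
    lo, hi = 0.0, 1.0
    while g(hi) < y:  # g is unbounded, so this doubling terminates
        hi *= 2
    # relative tolerance: an absolute 1e-12 could be below float spacing
    while hi - lo > tol * max(1.0, hi):
        mid = (lo + hi) / 2
        if g(mid) < y:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


print(g(0.0), 1 - math.cos(1.0))
print(g_inverse(g(3.0)))
```

Strict monotony is what makes the bisection well defined: for every $y$ in the range there is exactly one preimage, and the sign of $g(\text{mid}) - y$ tells on which side it lies.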
